As attack volumes surge and cyberattack methods grow more sophisticated, today’s cybersecurity infrastructure faces increasingly complex challenges. As a mitigation strategy, the integration of Deep Learning (DL) into Intrusion Detection Systems (IDS) has revolutionized detection capabilities, particularly in identifying zero-day attacks with a high degree of accuracy. However, this performance advantage comes at a serious cost: the “black-box” problem. As emphasized by Neupane et al. (2022) and Capuano et al. (2022), DL models often cannot explain why certain network traffic is classified as a threat.

This inability creates operational and psychological barriers for security analysts. Without transparency, automated decisions are difficult to verify, potentially leading to distrust or even disregard of critical security alerts. Therefore, transparency is no longer an optional feature but a fundamental requirement to ensure the effectiveness of IDS in production environments.

To address model opacity, recent research focuses on the adoption of post-hoc and model-agnostic Explainable AI (XAI) techniques. The two most prominent methods in the literature are SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations). Onyilo and Uzuegbu (2025) and Gaspar et al. (2024) demonstrate that these methods are highly effective when integrated with complex architectures such as Multi-Layer Perceptrons (MLPs) or hybrid CNN-LSTMs.

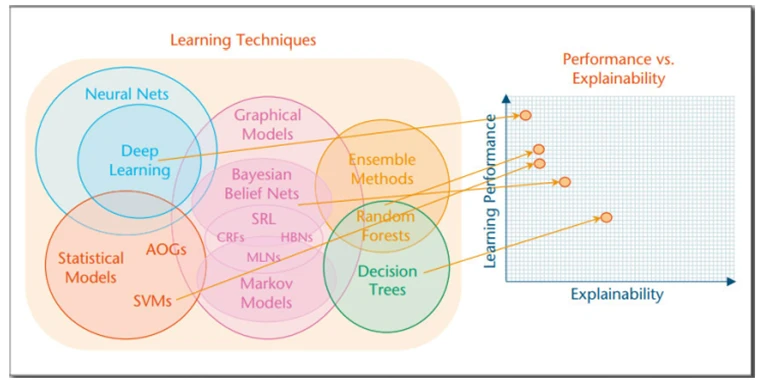

Machine Learning Techniques Map and Performance-Explainability Relationship by Gaspar et al. (2024)
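To make the local-surrogate idea behind LIME concrete, the following is a minimal sketch using only the Python standard library. It is not the `lime` package and not the setup used by the cited authors: `black_box`, the three flow features, the perturbation scales, and the kernel width are all hypothetical choices made for illustration. The sketch perturbs one flow, weights each perturbed sample by its proximity to the original, and fits a weighted linear model whose coefficients act as the local explanation.

```python
import math
import random

FEATURES = ["src_bytes", "duration", "failed_logins"]  # hypothetical flow features

def black_box(x):
    """Toy detector score in [0, 1]; stands in for a trained DL model."""
    src_bytes, duration, failed_logins = x
    z = 0.004 * src_bytes - 0.5 * duration + 1.2 * failed_logins - 3.0
    return 1.0 / (1.0 + math.exp(-z))

def solve(a, b):
    """Solve the linear system a @ w = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * w[j] for j in range(i + 1, n))
        w[i] = (m[i][n] - tail) / m[i][i]
    return w

def local_surrogate(f, x, scales, n_samples=500, kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear model around x; return per-feature weights."""
    rng = random.Random(seed)
    rows, ys, ws = [], [], []
    for _ in range(n_samples):
        z = [rng.gauss(0.0, 1.0) for _ in x]           # perturbation in scaled units
        sample = [xi + zi * si for xi, zi, si in zip(x, z, scales)]
        dist2 = sum(zi * zi for zi in z)
        ws.append(math.exp(-dist2 / kernel_width ** 2))  # closer samples count more
        rows.append([1.0] + z)                           # intercept + features
        ys.append(f(sample))
    d = len(x) + 1
    # Normal equations of weighted least squares: (R^T W R) coef = R^T W y
    a = [[sum(w * r[i] * r[j] for w, r in zip(ws, rows)) for j in range(d)]
         for i in range(d)]
    b = [sum(w * r[i] * y for w, r, y in zip(ws, rows, ys)) for i in range(d)]
    return solve(a, b)[1:]                               # drop the intercept

flow = [500.0, 1.0, 1.0]       # made-up flow near the decision boundary
scales = [100.0, 0.5, 1.0]     # assumed perturbation size per feature
weights = local_surrogate(black_box, flow, scales)
for name, w in zip(FEATURES, weights):
    print(f"{name:>14}: {w:+.4f}")
```

Each weight is expressed per scaled unit of its feature, so the values depend on the assumed `scales`; a positive weight means that increasing the feature pushes the local score toward "threat".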

Through feature contribution mechanisms, XAI enables the identification of specific attributes—such as source bytes, flow duration, or the sequence of system calls—that serve as the primary triggers for security alerts. These findings are supported by Udofot et al. (2024), who demonstrated that such explanatory visualizations significantly enhance analyst efficiency in Security Operations Centers (SOCs) by providing clear context behind each detection.
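As an illustration of how a feature contribution is computed, the sketch below evaluates exact Shapley values, the quantity that SHAP approximates, for a toy alert score. Everything here is an assumption made for illustration: the `score` function, the feature names, the flagged flow, and the choice of baseline are invented and do not come from the cited studies, and the SHAP library itself is not used.

```python
from itertools import combinations
from math import factorial

FEATURES = ["src_bytes", "duration", "failed_logins"]  # hypothetical flow features

def score(x):
    """Toy alert score standing in for a trained detector (not a real model)."""
    src_bytes, duration, failed_logins = x
    s = 0.002 * src_bytes + 0.3 * failed_logins - 0.1 * duration
    # Interaction: many failed logins on a large transfer is extra suspicious.
    if src_bytes > 1000 and failed_logins >= 3:
        s += 1.0
    return s

def shapley_values(f, x, baseline):
    """Exact Shapley values; features outside a coalition take baseline values."""
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for k in range(len(others) + 1):
            # Shapley weight for coalitions of size k that exclude feature i.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                without_i = [x[j] if j in subset else baseline[j] for j in range(n)]
                with_i = list(without_i)
                with_i[i] = x[i]
                phi[i] += weight * (f(with_i) - f(without_i))
    return phi

flow = [1500, 2.0, 4]       # flagged flow (made-up values)
baseline = [200, 2.0, 0]    # assumed "typical benign" reference point
phi = shapley_values(score, flow, baseline)
for name, value in zip(FEATURES, phi):
    print(f"{name:>14}: {value:+.3f}")
```

The contributions sum to the difference between the score of the flagged flow and the score of the baseline, which is what lets an analyst read them as a breakdown of the alert. Exact enumeration visits every coalition of the remaining features, so its cost grows exponentially with the number of features; practical tools rely on sampling or model-specific shortcuts instead.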

The integration of XAI into IDS goes beyond mere technical functionality; it is a strategic step toward an ethical and responsible AI ecosystem. Three key opportunities have been identified from this implementation:

- The Explainable IDS (X-IDS) framework aligns with global standards such as the EU AI Act and NIST guidelines. These standards demand full accountability for AI-based systems, particularly those used in critical infrastructure (Onyilo & Uzuegbu, 2025).

- Transparency enables developers to understand the root causes of model failures, such as false positives. With this information, feature refinement can be performed more precisely to enhance model robustness.

- XAI transforms the role of AI from an automated machine to a collaborative partner. AI’s ability to provide “reasoning” for its decisions fosters human security experts’ trust in automated systems.

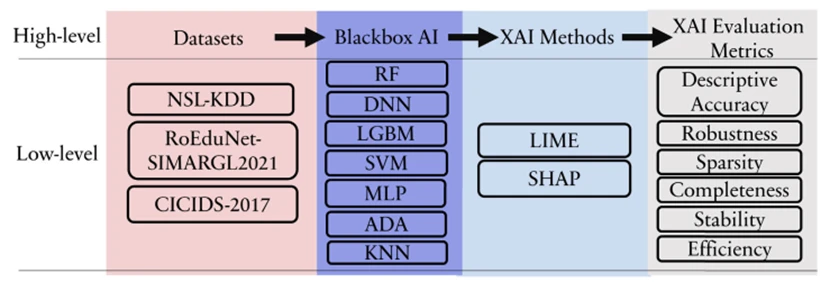

Architecture of the XAI Evaluation Framework for Machine Learning Models in Cybersecurity by Arreche et al. (2024)

Although promising, the study’s findings reveal several fundamental challenges that remain unresolved and require serious attention:

- Adversarial Attack Risks: Capuano et al. (2022) and Arreche et al. (2024) warn that XAI can be a “double-edged sword.” Sophisticated attackers can exploit XAI explanations to map the internal logic of an IDS, then design counterattacks that manipulate features to remain undetected.

- Computational Overhead: The explanation generation process demands additional resources. In high-speed networks processing millions of packets per second, providing real-time explanations without compromising detection performance poses a significant technical challenge.

- Standardization and Stability: Arreche et al. (2024), through the E-XAI framework, highlight the instability of XAI explanations. Minor perturbations in input data can yield vastly different explanations, which can confuse analysts and reduce the system’s overall reliability.
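One simple way to quantify the stability concern above is to explain a flow, explain slightly noisy copies of it, and compare the resulting attribution vectors. The sketch below does this with cosine similarity. It is not the E-XAI metric: the `score` function, the noise levels, and the use of a plain occlusion attribution (resetting one feature to a baseline and measuring the score drop) in place of SHAP or LIME are all assumptions chosen to keep the example self-contained.

```python
import math
import random

def score(x):
    """Toy detector score in [0, 1]; stands in for a trained model (assumption)."""
    src_bytes, duration, failed_logins = x
    z = 0.004 * src_bytes - 0.5 * duration + 1.2 * failed_logins - 3.0
    return 1.0 / (1.0 + math.exp(-z))

def occlusion_attribution(f, x, baseline):
    """Score drop observed when each feature is reset to its baseline value."""
    full = f(x)
    attrs = []
    for i in range(len(x)):
        occluded = list(x)
        occluded[i] = baseline[i]
        attrs.append(full - f(occluded))
    return attrs

def cosine(a, b):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(p * q for p, q in zip(a, b))
    na = math.sqrt(sum(p * p for p in a))
    nb = math.sqrt(sum(q * q for q in b))
    return dot / (na * nb) if na and nb else 0.0

def stability(f, x, baseline, noise, trials=200, seed=0):
    """Mean cosine similarity between the explanation of x and of noisy copies."""
    rng = random.Random(seed)
    reference = occlusion_attribution(f, x, baseline)
    sims = []
    for _ in range(trials):
        noisy = [xi + rng.gauss(0.0, s) for xi, s in zip(x, noise)]
        sims.append(cosine(reference, occlusion_attribution(f, noisy, baseline)))
    return sum(sims) / len(sims)

flow = [700.0, 2.0, 1.0]        # made-up flow near the decision boundary
baseline = [200.0, 2.0, 0.0]    # assumed benign reference point
no_noise = stability(score, flow, baseline, noise=[0.0, 0.0, 0.0])
with_noise = stability(score, flow, baseline, noise=[50.0, 0.2, 0.5])
print(f"stability without noise: {no_noise:.3f}")
print(f"stability with noise:    {with_noise:.3f}")
```

A value close to 1 means the explanation barely changes under the chosen noise; lower values indicate that small input changes reorder or rescale the contributing features, which is the behavior that can confuse analysts.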

The evolution toward Explainable Intrusion Detection Systems (X-IDS) is an urgent need in an increasingly automated cybersecurity ecosystem. Current research has demonstrated that transparency can be achieved without sacrificing accuracy, with reported performance exceeding 98% on benchmark datasets. However, significant challenges remain regarding resilience against adversarial manipulation and the standardization of explanation quality.

Future development must focus on creating models with intrinsic interpretability and on strengthening security within the XAI layer itself. The aim is to ensure that explanation mechanisms do not become new vulnerabilities for attackers to exploit but remain a pillar of trust in cybersecurity defense.

References:

Arreche, O., Guntur, T. R., Roberts, J. W., & Abdallah, M. (2024). E-XAI: Evaluating black-box explainable AI frameworks for network intrusion detection. IEEE Access, 12, 23971–23988.

Capuano, N., Fenza, G., Loia, V., & Stanzione, C. (2022). Explainable artificial intelligence in cybersecurity: A survey. IEEE Access, 10, 93576–93600.

Gaspar, D., Silva, P., & Silva, C. (2024). Explainable AI for intrusion detection systems: LIME and SHAP applicability on multi-layer perceptron. IEEE Access, 12, 30161–30175.

Neupane, S., Ables, J., Anderson, W., Mittal, S., Rahimi, S., Banicescu, I., & Seale, M. (2022). Explainable intrusion detection systems (X-IDS): A survey of current methods, challenges, and opportunities. IEEE Access, 10, 112392–112415.

Onyilo, J., & Uzuegbu, V. C. (2025). Explainable artificial intelligence intrusion detection system: Improving transparency and trust in cybersecurity. Nature Journal of Emerging Sciences, Technologies, and Innovations, 1(1), 85–103.